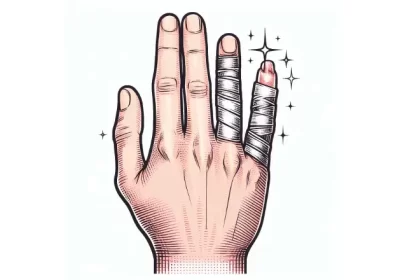

Hands have always been a challenge for artists, and it seems that artificial intelligence (AI) is also struggling to get them right. Although AI can render almost anything with photographic, hyper-realistic detail, there is one element it has not yet mastered: hands.

AI image generators, such as DALL-E and Midjourney, are notorious for adding too many fingers, making the images look like they’re from a nightmare. But why does AI struggle with the number of fingers?

One reason is that in the datasets used to train image synthesizers, humans display their hands less frequently than their faces. “Hands also tend to be much smaller in the source images, as they are rarely visible in their full form,” says a specialist in the field.

2D image generators also struggle to conceptualize the 3D geometry of a hand. Amelia Winger-Bearskin, a professor of AI at the University of Florida, explains that generative AI simply doesn’t understand what a hand is and what its function is. “It just looks at how hands are represented in the images it was trained on,” she adds.

But it’s not just AI that struggles with hands. Many visual artists also have similar problems. In fact, studying hands in art schools is much more complex than studying other parts of the human body. “Da Vinci was actually quite obsessed with hands and did many, many studies on them,” says Winger-Bearskin.

If it’s challenging for humans to draw hands, it’s no surprise that AI finds such complicated geometry difficult to reproduce. Image generators are not yet capable of working out a hand’s 3D structure or “thinking” beyond the flat views they were trained on.

In conclusion, while AI has revolutionized image creation, it still has some way to go when it comes to hands. But as the technology continues to develop, it is likely only a matter of time before it masters the art of creating realistic hands.